Administer a Cluster

- 1: Administration with kubeadm

- 1.1: Certificate Management with kubeadm

- 1.2: Configuring a cgroup driver

- 1.3: Upgrading kubeadm clusters

- 1.4: Adding Windows nodes

- 1.5: Upgrading Windows nodes

- 2: Migrating from dockershim

- 2.1: Changing the Container Runtime on a Node from Docker Engine to containerd

- 2.2: Find Out What Container Runtime is Used on a Node

- 2.3: Check whether Dockershim deprecation affects you

- 2.4: Migrating telemetry and security agents from dockershim

- 3: Certificates

- 4: Manage Memory, CPU, and API Resources

- 4.1: Configure Default Memory Requests and Limits for a Namespace

- 4.2: Configure Default CPU Requests and Limits for a Namespace

- 4.3: Configure Minimum and Maximum Memory Constraints for a Namespace

- 4.4: Configure Minimum and Maximum CPU Constraints for a Namespace

- 4.5: Configure Memory and CPU Quotas for a Namespace

- 4.6: Configure a Pod Quota for a Namespace

- 5: Install a Network Policy Provider

- 5.1: Use Antrea for NetworkPolicy

- 5.2: Use Calico for NetworkPolicy

- 5.3: Use Cilium for NetworkPolicy

- 5.4: Use Kube-router for NetworkPolicy

- 5.5: Romana for NetworkPolicy

- 5.6: Weave Net for NetworkPolicy

- 6: Access Clusters Using the Kubernetes API

- 7: Advertise Extended Resources for a Node

- 8: Autoscale the DNS Service in a Cluster

- 9: Change the default StorageClass

- 10: Change the Reclaim Policy of a PersistentVolume

- 11: Cloud Controller Manager Administration

- 12: Configure Quotas for API Objects

- 13: Control CPU Management Policies on the Node

- 14: Control Topology Management Policies on a node

- 15: Customizing DNS Service

- 16: Debugging DNS Resolution

- 17: Declare Network Policy

- 18: Developing Cloud Controller Manager

- 19: Enable Or Disable A Kubernetes API

- 20: Enabling Service Topology

- 21: Encrypting Secret Data at Rest

- 22: Guaranteed Scheduling For Critical Add-On Pods

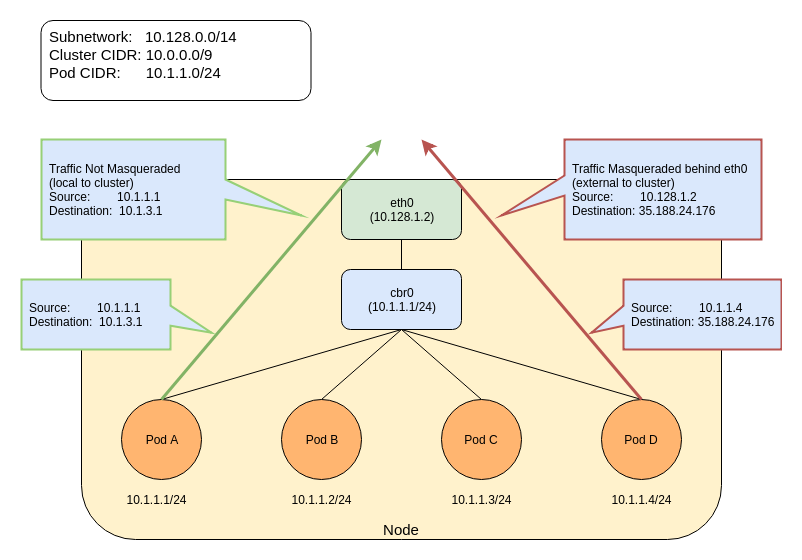

- 23: IP Masquerade Agent User Guide

- 24: Limit Storage Consumption

- 25: Migrate Replicated Control Plane To Use Cloud Controller Manager

- 26: Namespaces Walkthrough

- 27: Operating etcd clusters for Kubernetes

- 28: Reconfigure a Node's Kubelet in a Live Cluster

- 29: Reserve Compute Resources for System Daemons

- 30: Running Kubernetes Node Components as a Non-root User

- 31: Safely Drain a Node

- 32: Securing a Cluster

- 33: Set Kubelet parameters via a config file

- 34: Share a Cluster with Namespaces

- 35: Upgrade A Cluster

- 36: Use Cascading Deletion in a Cluster

- 37: Using a KMS provider for data encryption

- 38: Using CoreDNS for Service Discovery

- 39: Using NodeLocal DNSCache in Kubernetes clusters

- 40: Using sysctls in a Kubernetes Cluster

- 41: Utilizing the NUMA-aware Memory Manager

1 - Administration with kubeadm

1.1 - Certificate Management with kubeadm

Kubernetes v1.15 [stable]

Client certificates generated by kubeadm expire after 1 year. This page explains how to manage certificate renewals with kubeadm. It also covers other tasks related to kubeadm certificate management.

Before you begin

You should be familiar with PKI certificates and requirements in Kubernetes.

Using custom certificates

By default, kubeadm generates all the certificates needed for a cluster to run. You can override this behavior by providing your own certificates.

To do so, you must place them in whatever directory is specified by the --cert-dir flag or the certificatesDir field of kubeadm's ClusterConfiguration. By default this is /etc/kubernetes/pki.

If a given certificate and private key pair exists before running kubeadm init, kubeadm does not overwrite them. This means you can, for example, copy an existing CA into /etc/kubernetes/pki/ca.crt and /etc/kubernetes/pki/ca.key, and kubeadm will use this CA for signing the rest of the certificates.

External CA mode

It is also possible to provide only the ca.crt file and not the ca.key file (this is only available for the root CA file, not other cert pairs). If all other certificates and kubeconfig files are in place, kubeadm recognizes this condition and activates the "External CA" mode. kubeadm will proceed without the CA key on disk.

Instead, run the controller-manager standalone with --controllers=csrsigner and point to the CA certificate and key.

PKI certificates and requirements includes guidance on setting up a cluster to use an external CA.

Check certificate expiration

You can use the check-expiration subcommand to check when certificates expire:

kubeadm certs check-expiration

The output is similar to this:

CERTIFICATE EXPIRES RESIDUAL TIME CERTIFICATE AUTHORITY EXTERNALLY MANAGED

admin.conf Dec 30, 2020 23:36 UTC 364d no

apiserver Dec 30, 2020 23:36 UTC 364d ca no

apiserver-etcd-client Dec 30, 2020 23:36 UTC 364d etcd-ca no

apiserver-kubelet-client Dec 30, 2020 23:36 UTC 364d ca no

controller-manager.conf Dec 30, 2020 23:36 UTC 364d no

etcd-healthcheck-client Dec 30, 2020 23:36 UTC 364d etcd-ca no

etcd-peer Dec 30, 2020 23:36 UTC 364d etcd-ca no

etcd-server Dec 30, 2020 23:36 UTC 364d etcd-ca no

front-proxy-client Dec 30, 2020 23:36 UTC 364d front-proxy-ca no

scheduler.conf Dec 30, 2020 23:36 UTC 364d no

CERTIFICATE AUTHORITY EXPIRES RESIDUAL TIME EXTERNALLY MANAGED

ca Dec 28, 2029 23:36 UTC 9y no

etcd-ca Dec 28, 2029 23:36 UTC 9y no

front-proxy-ca Dec 28, 2029 23:36 UTC 9y no

The command shows expiration/residual time for the client certificates in the /etc/kubernetes/pki folder and for the client certificate embedded in the KUBECONFIG files used by kubeadm (admin.conf, controller-manager.conf and scheduler.conf).

Additionally, kubeadm informs the user if the certificate is externally managed; in this case, the user should take care of managing certificate renewal manually/using other tools.

kubeadm cannot manage certificates signed by an external CA.
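To act on this output in a script, one option is to filter it by the RESIDUAL TIME column. The following is a minimal sketch and not part of kubeadm: the helper name expiring_certs is hypothetical, and it assumes the residual time is printed as in the sample above (a number followed by d or y), treating any other value as less than one day.

```shell
# expiring_certs THRESHOLD_DAYS
# Hypothetical helper: reads `kubeadm certs check-expiration` output on stdin
# and prints the names of entries whose residual time is below THRESHOLD_DAYS.
expiring_certs() {
  awk -v limit="$1" '
    # Data rows look like: NAME Mon DD, YYYY HH:MM UTC RESIDUAL ...
    # Header rows never have "UTC" in the sixth field, so they are skipped.
    $6 == "UTC" {
      residual = $7
      unit = substr(residual, length(residual))
      value = substr(residual, 1, length(residual) - 1) + 0
      if (unit == "y") days = value * 365
      else if (unit == "d") days = value
      else days = 0   # assumption: anything else means less than one day left
      if (days < limit) print $1
    }
  '
}
```

For example, kubeadm certs check-expiration | expiring_certs 30 would print the entries with fewer than 30 days left.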

kubelet.conf is not included in the list above because kubeadm configures kubelet for automatic certificate renewal with rotatable certificates under /var/lib/kubelet/pki. To repair an expired kubelet client certificate see Kubelet client certificate rotation fails.

On nodes created with kubeadm init, prior to kubeadm version 1.17, there is a bug where you manually have to modify the contents of kubelet.conf. After kubeadm init finishes, you should update kubelet.conf to point to the rotated kubelet client certificates, by replacing client-certificate-data and client-key-data with:

client-certificate: /var/lib/kubelet/pki/kubelet-client-current.pem

client-key: /var/lib/kubelet/pki/kubelet-client-current.pem

Automatic certificate renewal

kubeadm renews all the certificates during control plane upgrade.

This feature is designed for addressing the simplest use cases; if you don't have specific requirements on certificate renewal and perform Kubernetes version upgrades regularly (less than 1 year in between each upgrade), kubeadm will take care of keeping your cluster up to date and reasonably secure.

If you have more complex requirements for certificate renewal, you can opt out from the default behavior by passing --certificate-renewal=false to kubeadm upgrade apply or to kubeadm upgrade node.

Prior to kubeadm version 1.17, there is a bug where the default value of --certificate-renewal is false for the kubeadm upgrade node command. In that case, you should explicitly set --certificate-renewal=true.

Manual certificate renewal

You can renew your certificates manually at any time with the kubeadm certs renew command.

This command performs the renewal using CA (or front-proxy-CA) certificate and key stored in /etc/kubernetes/pki.

After running the command you should restart the control plane Pods. This is required since dynamic certificate reload is currently not supported for all components and certificates. Static Pods are managed by the local kubelet and not by the API Server, thus kubectl cannot be used to delete and restart them. To restart a static Pod you can temporarily remove its manifest file from /etc/kubernetes/manifests/ and wait for 20 seconds (see the fileCheckFrequency value in the KubeletConfiguration struct). The kubelet will terminate the Pod if it's no longer in the manifest directory. You can then move the file back and after another fileCheckFrequency period, the kubelet will recreate the Pod and the certificate renewal for the component can complete.
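The manifest move described here can be scripted. The sketch below is one possible way to do it, not an official tool: the function name restart_static_pod is hypothetical, it assumes the manifest is named COMPONENT.yaml (for example kube-apiserver.yaml), and it parks the file next to the manifest directory so that it stays on the same filesystem.

```shell
# restart_static_pod COMPONENT [WAIT_SECONDS] [MANIFEST_DIR]
# Hypothetical helper: moves a static Pod manifest out of the directory the
# kubelet watches, waits, then moves it back.
restart_static_pod() {
  component=$1
  wait_seconds=${2:-20}                          # default matches the 20 seconds above
  manifest_dir=${3:-/etc/kubernetes/manifests}
  manifest="$manifest_dir/$component.yaml"       # assumption: file is COMPONENT.yaml
  parked="$(dirname "$manifest_dir")/$component.yaml.parked"

  if [ ! -f "$manifest" ]; then
    echo "manifest not found: $manifest" >&2
    return 1
  fi

  # With the manifest gone, the kubelet terminates the Pod on its next check.
  mv "$manifest" "$parked" || return 1
  sleep "$wait_seconds"

  # Once the manifest is back, the kubelet recreates the Pod on a later check.
  mv "$parked" "$manifest"
}
```

A call such as restart_static_pod kube-apiserver would need to run as root on the control plane node. The function returns as soon as the manifest is back in place, so allow another fileCheckFrequency period before expecting the Pod to be running again.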

certs renew uses the existing certificates as the authoritative source for attributes (Common Name, Organization, SAN, etc.) instead of the kubeadm-config ConfigMap. It is strongly recommended to keep them both in sync.

kubeadm certs renew provides the following options:

The Kubernetes certificates normally reach their expiration date after one year.

- --csr-only can be used to renew certificates with an external CA by generating certificate signing requests (without actually renewing certificates in place); see next paragraph for more information.

- It's also possible to renew a single certificate instead of all.

Renew certificates with the Kubernetes certificates API

This section provides more details about how to execute manual certificate renewal using the Kubernetes certificates API.

Set up a signer

The Kubernetes Certificate Authority does not work out of the box. You can configure an external signer such as cert-manager, or you can use the built-in signer.

The built-in signer is part of kube-controller-manager.

To activate the built-in signer, you must pass the --cluster-signing-cert-file and --cluster-signing-key-file flags.

If you're creating a new cluster, you can use a kubeadm configuration file:

apiVersion: kubeadm.k8s.io/v1beta3
kind: ClusterConfiguration
controllerManager:
  extraArgs:
    cluster-signing-cert-file: /etc/kubernetes/pki/ca.crt
    cluster-signing-key-file: /etc/kubernetes/pki/ca.key

Create certificate signing requests (CSR)

See Create CertificateSigningRequest for creating CSRs with the Kubernetes API.

Renew certificates with external CA

This section provides more details about how to execute manual certificate renewal using an external CA.

To better integrate with external CAs, kubeadm can also produce certificate signing requests (CSRs). A CSR represents a request to a CA for a signed certificate for a client. In kubeadm terms, any certificate that would normally be signed by an on-disk CA can be produced as a CSR instead. A CA, however, cannot be produced as a CSR.

Create certificate signing requests (CSR)

You can create certificate signing requests with kubeadm certs renew --csr-only.

Both the CSR and the accompanying private key are given in the output.

You can pass in a directory with --csr-dir to output the CSRs to the specified location.

If --csr-dir is not specified, the default certificate directory (/etc/kubernetes/pki) is used.

Certificates can be renewed with kubeadm certs renew --csr-only.

As with kubeadm init, an output directory can be specified with the --csr-dir flag.

A CSR contains a certificate's name, domains, and IPs, but it does not specify usages. It is the responsibility of the CA to specify the correct cert usages when issuing a certificate.

- In openssl this is done with the openssl ca command.

- In cfssl you specify usages in the config file.

After a certificate is signed using your preferred method, the certificate and the private key must be copied to the PKI directory (by default /etc/kubernetes/pki).

Certificate authority (CA) rotation

Kubeadm does not support rotation or replacement of CA certificates out of the box.

For more information about manual rotation or replacement of CA, see manual rotation of CA certificates.

Enabling signed kubelet serving certificates

By default the kubelet serving certificate deployed by kubeadm is self-signed. This means a connection from external services like the metrics-server to a kubelet cannot be secured with TLS.

To configure the kubelets in a new kubeadm cluster to obtain properly signed serving certificates you must pass the following minimal configuration to kubeadm init:

apiVersion: kubeadm.k8s.io/v1beta3

kind: ClusterConfiguration

---

apiVersion: kubelet.config.k8s.io/v1beta1

kind: KubeletConfiguration

serverTLSBootstrap: true

If you have already created the cluster you must adapt it by doing the following:

- Find and edit the kubelet-config-1.23 ConfigMap in the kube-system namespace. In that ConfigMap, the kubelet key has a KubeletConfiguration document as its value. Edit the KubeletConfiguration document to set serverTLSBootstrap: true.

- On each node, add the serverTLSBootstrap: true field in /var/lib/kubelet/config.yaml and restart the kubelet with systemctl restart kubelet

The field serverTLSBootstrap: true will enable the bootstrap of kubelet serving certificates by requesting them from the certificates.k8s.io API. One known limitation is that the CSRs (Certificate Signing Requests) for these certificates cannot be automatically approved by the default signer in the kube-controller-manager - kubernetes.io/kubelet-serving. This will require action from the user or a third party controller.

These CSRs can be viewed using:

kubectl get csr

NAME AGE SIGNERNAME REQUESTOR CONDITION

csr-9wvgt 112s kubernetes.io/kubelet-serving system:node:worker-1 Pending

csr-lz97v 1m58s kubernetes.io/kubelet-serving system:node:control-plane-1 Pending

To approve them you can do the following:

kubectl certificate approve <CSR-name>
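When many nodes are involved, it can help to list only the pending kubelet serving CSRs together with the node that requested each one, so that every request can be reviewed before approval. The following is a minimal sketch under stated assumptions: the function name pending_kubelet_serving_csrs is hypothetical, and it assumes the column order shown above, with the condition in the last column.

```shell
# pending_kubelet_serving_csrs
# Hypothetical helper: reads `kubectl get csr` output on stdin and prints the
# name and requestor of each pending kubelet serving CSR.
pending_kubelet_serving_csrs() {
  awk '
    # Column 3 is SIGNERNAME, column 4 is REQUESTOR, the last column is CONDITION.
    $3 == "kubernetes.io/kubelet-serving" && $NF == "Pending" { print $1, $4 }
  '
}
```

For example, kubectl get csr | pending_kubelet_serving_csrs prints one line per request. The sketch deliberately stops short of approving anything: each listed CSR should still be checked and then approved with kubectl certificate approve.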

By default, these serving certificates will expire after one year. Kubeadm sets the KubeletConfiguration field rotateCertificates to true, which means that close to expiration a new set of CSRs for the serving certificates will be created and must be approved to complete the rotation. To understand more see Certificate Rotation.

If you are looking for a solution for automatic approval of these CSRs it is recommended that you contact your cloud provider and ask if they have a CSR signer that verifies the node identity with an out of band mechanism.

Third party custom controllers can also be used.

Such a controller is not a secure mechanism unless it not only verifies the CommonName in the CSR but also verifies the requested IPs and domain names. This would prevent a malicious actor that has access to a kubelet client certificate from creating CSRs that request serving certificates for any IP or domain name.

Generating kubeconfig files for additional users

During cluster creation, kubeadm signs the certificate in the admin.conf to have Subject: O = system:masters, CN = kubernetes-admin. system:masters is a break-glass, super user group that bypasses the authorization layer (e.g. RBAC). Sharing the admin.conf with additional users is not recommended!

Instead, you can use the kubeadm kubeconfig user command to generate kubeconfig files for additional users. The command accepts a mixture of command line flags and kubeadm configuration options. The generated kubeconfig will be written to stdout and can be piped to a file using kubeadm kubeconfig user ... > somefile.conf.

Example configuration file that can be used with --config:

# example.yaml

apiVersion: kubeadm.k8s.io/v1beta3

kind: ClusterConfiguration

# Will be used as the target "cluster" in the kubeconfig

clusterName: "kubernetes"

# Will be used as the "server" (IP or DNS name) of this cluster in the kubeconfig

controlPlaneEndpoint: "some-dns-address:6443"

# The cluster CA key and certificate will be loaded from this local directory

certificatesDir: "/etc/kubernetes/pki"

Make sure that these settings match the desired target cluster settings. To see the settings of an existing cluster use:

kubectl get cm kubeadm-config -n kube-system -o=jsonpath="{.data.ClusterConfiguration}"

The following example will generate a kubeconfig file with credentials valid for 24 hours

for a new user johndoe that is part of the appdevs group:

kubeadm kubeconfig user --config example.yaml --org appdevs --client-name johndoe --validity-period 24h

The following example will generate a kubeconfig file with administrator credentials valid for 1 week:

kubeadm kubeconfig user --config example.yaml --client-name admin --validity-period 168h

1.2 - Configuring a cgroup driver

This page explains how to configure the kubelet cgroup driver to match the container runtime cgroup driver for kubeadm clusters.

Before you begin

You should be familiar with the Kubernetes container runtime requirements.

Configuring the container runtime cgroup driver

The Container runtimes page explains that the systemd driver is recommended for kubeadm based setups instead of the cgroupfs driver, because kubeadm manages the kubelet as a systemd service. The page also provides details on how to set up a number of different container runtimes with the systemd driver by default.

Configuring the kubelet cgroup driver

kubeadm allows you to pass a KubeletConfiguration structure during kubeadm init. This KubeletConfiguration can include the cgroupDriver field which controls the cgroup driver of the kubelet.

If you do not set the cgroupDriver field under KubeletConfiguration, kubeadm will default it to systemd.

A minimal example of configuring the field explicitly:

# kubeadm-config.yaml

kind: ClusterConfiguration

apiVersion: kubeadm.k8s.io/v1beta3

kubernetesVersion: v1.21.0

---

kind: KubeletConfiguration

apiVersion: kubelet.config.k8s.io/v1beta1

cgroupDriver: systemd

Such a configuration file can then be passed to the kubeadm command:

kubeadm init --config kubeadm-config.yaml

Kubeadm uses the same KubeletConfiguration for all nodes in the cluster. The KubeletConfiguration is stored in a ConfigMap object under the kube-system namespace.

Executing the sub commands init, join and upgrade would result in kubeadm writing the KubeletConfiguration as a file under /var/lib/kubelet/config.yaml and passing it to the local node kubelet.

Using the cgroupfs driver

As this guide explains, using the cgroupfs driver with kubeadm is not recommended.

To continue using cgroupfs and to prevent kubeadm upgrade from modifying the KubeletConfiguration cgroup driver on existing setups, you must be explicit about its value. This applies to a case where you do not wish future versions of kubeadm to apply the systemd driver by default.

See the below section on "Modify the kubelet ConfigMap" for details on how to be explicit about the value.

If you wish to configure a container runtime to use the cgroupfs driver, you must refer to the documentation of the container runtime of your choice.

Migrating to the systemd driver

To change the cgroup driver of an existing kubeadm cluster to systemd in-place, a similar procedure to a kubelet upgrade is required. This must include both steps outlined below.

Alternatively, you can replace the old nodes in the cluster with new ones that use the systemd driver. This requires executing only the first step below before joining the new nodes and ensuring the workloads can safely move to the new nodes before deleting the old nodes.

Modify the kubelet ConfigMap

- Find the kubelet ConfigMap name using kubectl get cm -n kube-system | grep kubelet-config.

- Call kubectl edit cm kubelet-config-x.yy -n kube-system (replace x.yy with the Kubernetes version).

- Either modify the existing cgroupDriver value or add a new field that looks like this:

cgroupDriver: systemd

This field must be present under the kubelet: section of the ConfigMap.

Update the cgroup driver on all nodes

For each node in the cluster:

- Drain the node using kubectl drain <node-name> --ignore-daemonsets

- Stop the kubelet using systemctl stop kubelet

- Stop the container runtime

- Modify the container runtime cgroup driver to systemd

- Set cgroupDriver: systemd in /var/lib/kubelet/config.yaml

- Start the container runtime

- Start the kubelet using systemctl start kubelet

- Uncordon the node using kubectl uncordon <node-name>

Execute these steps on nodes one at a time to ensure workloads have sufficient time to schedule on different nodes.

Once the process is complete ensure that all nodes and workloads are healthy.
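The step that sets cgroupDriver in the kubelet configuration file can be scripted so that it behaves the same on every node. The following is a minimal sketch, not an official tool: the function name set_cgroup_driver is hypothetical, and it assumes cgroupDriver is a top-level key at the start of a line and that the file ends with a newline.

```shell
# set_cgroup_driver FILE DRIVER
# Hypothetical helper: sets the top-level cgroupDriver key in a kubelet
# configuration file, adding the key if it is missing.
set_cgroup_driver() {
  file=$1
  driver=$2
  if grep -q '^cgroupDriver:' "$file"; then
    # Rewrite through a temporary file, then copy the content back so the
    # original file keeps its ownership and permissions.
    tmp=$(mktemp) || return 1
    sed "s/^cgroupDriver:.*/cgroupDriver: $driver/" "$file" > "$tmp" || return 1
    cat "$tmp" > "$file"
    rm -f "$tmp"
  else
    printf 'cgroupDriver: %s\n' "$driver" >> "$file"
  fi
}
```

On a node this would be called as set_cgroup_driver /var/lib/kubelet/config.yaml systemd, while the kubelet is stopped and before it is started again.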

1.3 - Upgrading kubeadm clusters

This page explains how to upgrade a Kubernetes cluster created with kubeadm from version 1.22.x to version 1.23.x, and from version 1.23.x to 1.23.y (where y > x). Skipping MINOR versions when upgrading is unsupported.
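The rule can be expressed as a small pre-flight check. This is a sketch only and not something kubeadm provides: the function name upgrade_step_ok is hypothetical, and it assumes plain three-part versions such as 1.22.4 or v1.23.0, without package suffixes.

```shell
# upgrade_step_ok CURRENT TARGET
# Hypothetical helper: succeeds if TARGET is a later patch of the same minor
# version as CURRENT, or any patch of the next minor version.
upgrade_step_ok() {
  cur=${1#v}
  tgt=${2#v}
  cur_major=${cur%%.*}
  tgt_major=${tgt%%.*}
  cur_rest=${cur#*.}
  tgt_rest=${tgt#*.}
  cur_minor=${cur_rest%%.*}
  tgt_minor=${tgt_rest%%.*}
  cur_patch=${cur_rest#*.}
  tgt_patch=${tgt_rest#*.}

  [ "$cur_major" -eq "$tgt_major" ] || return 1
  if [ "$tgt_minor" -eq "$cur_minor" ]; then
    # Same minor version: only a later patch counts as an upgrade.
    [ "$tgt_patch" -gt "$cur_patch" ]
  else
    # Different minor version: only the next one is allowed.
    [ "$tgt_minor" -eq $((cur_minor + 1)) ]
  fi
}
```

For example, upgrade_step_ok 1.22.4 v1.23.1 succeeds, while upgrade_step_ok 1.21.9 1.23.0 fails because it would skip 1.22.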

To see information about upgrading clusters created using older versions of kubeadm, please refer to the following pages instead:

- Upgrading a kubeadm cluster from 1.21 to 1.22

- Upgrading a kubeadm cluster from 1.20 to 1.21

- Upgrading a kubeadm cluster from 1.19 to 1.20

- Upgrading a kubeadm cluster from 1.18 to 1.19

The upgrade workflow at a high level is the following:

- Upgrade a primary control plane node.

- Upgrade additional control plane nodes.

- Upgrade worker nodes.

Before you begin

- Make sure you read the release notes carefully.

- The cluster should use a static control plane and etcd pods or external etcd.

- Make sure to back up any important components, such as app-level state stored in a database. kubeadm upgrade does not touch your workloads, only components internal to Kubernetes, but backups are always a best practice.

- Swap must be disabled.

Additional information

- The instructions below outline when to drain each node during the upgrade process. If you are performing a minor version upgrade for any kubelet, you must first drain the node (or nodes) that you are upgrading. In the case of control plane nodes, they could be running CoreDNS Pods or other critical workloads. For more information see Draining nodes.

- All containers are restarted after upgrade, because the container spec hash value is changed.

- To verify that the kubelet service has successfully restarted after the kubelet has been upgraded, you can execute systemctl status kubelet or view the service logs with journalctl -xeu kubelet.

Determine which version to upgrade to

Find the latest patch release for Kubernetes 1.23 using the OS package manager:

# On Debian-based distributions (apt):
apt update
apt-cache madison kubeadm
# find the latest 1.23 version in the list
# it should look like 1.23.x-00, where x is the latest patch

# On RPM-based distributions (yum):
yum list --showduplicates kubeadm --disableexcludes=kubernetes
# find the latest 1.23 version in the list
# it should look like 1.23.x-0, where x is the latest patch

Upgrading control plane nodes

The upgrade procedure on control plane nodes should be executed one node at a time.

Pick a control plane node that you wish to upgrade first. It must have the /etc/kubernetes/admin.conf file.

Call "kubeadm upgrade"

For the first control plane node

- Upgrade kubeadm:

# On Debian-based distributions (apt):
# replace x in 1.23.x-00 with the latest patch version
apt-mark unhold kubeadm && \
apt-get update && apt-get install -y kubeadm=1.23.x-00 && \
apt-mark hold kubeadm

# On RPM-based distributions (yum):
# replace x in 1.23.x-0 with the latest patch version
yum install -y kubeadm-1.23.x-0 --disableexcludes=kubernetes

- Verify that the download works and has the expected version:

kubeadm version

- Verify the upgrade plan:

kubeadm upgrade plan

This command checks that your cluster can be upgraded, and fetches the versions you can upgrade to. It also shows a table with the component config version states.

kubeadm upgrade also automatically renews the certificates that it manages on this node. To opt-out of certificate renewal the flag --certificate-renewal=false can be used. For more information see the certificate management guide.

If kubeadm upgrade plan shows any component configs that require manual upgrade, users must provide a config file with replacement configs to kubeadm upgrade apply via the --config command line flag. Failing to do so will cause kubeadm upgrade apply to exit with an error and not perform an upgrade.

- Choose a version to upgrade to, and run the appropriate command. For example:

# replace x with the patch version you picked for this upgrade
sudo kubeadm upgrade apply v1.23.x

Once the command finishes you should see:

[upgrade/successful] SUCCESS! Your cluster was upgraded to "v1.23.x". Enjoy!

[upgrade/kubelet] Now that your control plane is upgraded, please proceed with upgrading your kubelets if you haven't already done so.

- Manually upgrade your CNI provider plugin.

Your Container Network Interface (CNI) provider may have its own upgrade instructions to follow. Check the addons page to find your CNI provider and see whether additional upgrade steps are required.

This step is not required on additional control plane nodes if the CNI provider runs as a DaemonSet.

For the other control plane nodes

Same as the first control plane node but use:

sudo kubeadm upgrade node

instead of:

sudo kubeadm upgrade apply

Also calling kubeadm upgrade plan and upgrading the CNI provider plugin is no longer needed.

Drain the node

- Prepare the node for maintenance by marking it unschedulable and evicting the workloads:

# replace <node-to-drain> with the name of your node you are draining
kubectl drain <node-to-drain> --ignore-daemonsets

Upgrade kubelet and kubectl

- Upgrade the kubelet and kubectl:

# On Debian-based distributions (apt):
# replace x in 1.23.x-00 with the latest patch version
apt-mark unhold kubelet kubectl && \
apt-get update && apt-get install -y kubelet=1.23.x-00 kubectl=1.23.x-00 && \
apt-mark hold kubelet kubectl

# On RPM-based distributions (yum):
# replace x in 1.23.x-0 with the latest patch version
yum install -y kubelet-1.23.x-0 kubectl-1.23.x-0 --disableexcludes=kubernetes

- Restart the kubelet:

sudo systemctl daemon-reload
sudo systemctl restart kubelet

Uncordon the node

- Bring the node back online by marking it schedulable:

# replace <node-to-drain> with the name of your node
kubectl uncordon <node-to-drain>

Upgrade worker nodes

The upgrade procedure on worker nodes should be executed one node at a time or a few nodes at a time, without compromising the minimum required capacity for running your workloads.

Upgrade kubeadm

- Upgrade kubeadm:

# On Debian-based distributions (apt):
# replace x in 1.23.x-00 with the latest patch version
apt-mark unhold kubeadm && \
apt-get update && apt-get install -y kubeadm=1.23.x-00 && \
apt-mark hold kubeadm

# On RPM-based distributions (yum):
# replace x in 1.23.x-0 with the latest patch version
yum install -y kubeadm-1.23.x-0 --disableexcludes=kubernetes

Call "kubeadm upgrade"

- For worker nodes this upgrades the local kubelet configuration:

sudo kubeadm upgrade node

Drain the node

- Prepare the node for maintenance by marking it unschedulable and evicting the workloads:

# replace <node-to-drain> with the name of your node you are draining
kubectl drain <node-to-drain> --ignore-daemonsets

Upgrade kubelet and kubectl

- Upgrade the kubelet and kubectl:

# On Debian-based distributions (apt):
# replace x in 1.23.x-00 with the latest patch version
apt-mark unhold kubelet kubectl && \
apt-get update && apt-get install -y kubelet=1.23.x-00 kubectl=1.23.x-00 && \
apt-mark hold kubelet kubectl

# On RPM-based distributions (yum):
# replace x in 1.23.x-0 with the latest patch version
yum install -y kubelet-1.23.x-0 kubectl-1.23.x-0 --disableexcludes=kubernetes

- Restart the kubelet:

sudo systemctl daemon-reload
sudo systemctl restart kubelet

Uncordon the node

- Bring the node back online by marking it schedulable:

# replace <node-to-drain> with the name of your node
kubectl uncordon <node-to-drain>

Verify the status of the cluster

After the kubelet is upgraded on all nodes, verify that all nodes are available again by running the following command from anywhere kubectl can access the cluster:

kubectl get nodes

The STATUS column should show Ready for all your nodes, and the version number should be updated.
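On larger clusters it may be easier to list only the nodes that still need attention. The following is a minimal sketch rather than an official check: the function name nodes_not_upgraded is hypothetical, and it assumes the default kubectl get nodes columns (NAME, STATUS, ROLES, AGE, VERSION).

```shell
# nodes_not_upgraded VERSION_PREFIX
# Hypothetical helper: reads `kubectl get nodes` output on stdin and prints
# nodes whose STATUS is not exactly Ready or whose VERSION does not start
# with VERSION_PREFIX.
nodes_not_upgraded() {
  awk -v want="$1" '
    NR == 1 { next }   # skip the header row
    $2 != "Ready" || index($5, want) != 1 { print $1 }
  '
}
```

For example, kubectl get nodes | nodes_not_upgraded v1.23. prints nothing once every node is Ready and running a 1.23 kubelet. A node that is still cordoned shows a status such as Ready,SchedulingDisabled and is therefore listed as well.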

Recovering from a failure state

If kubeadm upgrade fails and does not roll back, for example because of an unexpected shutdown during execution, you can run kubeadm upgrade again.

This command is idempotent and eventually makes sure that the actual state is the desired state you declare.

To recover from a bad state, you can also run kubeadm upgrade apply --force without changing the version that your cluster is running.

During upgrade kubeadm writes the following backup folders under /etc/kubernetes/tmp:

- kubeadm-backup-etcd-<date>-<time>

- kubeadm-backup-manifests-<date>-<time>

kubeadm-backup-etcd contains a backup of the local etcd member data for this control plane Node. In case of an etcd upgrade failure and if the automatic rollback does not work, the contents of this folder can be manually restored in /var/lib/etcd. In case external etcd is used this backup folder will be empty.

kubeadm-backup-manifests contains a backup of the static Pod manifest files for this control plane Node. In case of an upgrade failure and if the automatic rollback does not work, the contents of this folder can be manually restored in /etc/kubernetes/manifests. If for some reason there is no difference between a pre-upgrade and post-upgrade manifest file for a certain component, a backup file for it will not be written.

How it works

kubeadm upgrade apply does the following:

- Checks that your cluster is in an upgradeable state:

  - The API server is reachable

  - All nodes are in the Ready state

  - The control plane is healthy

- Enforces the version skew policies.

- Makes sure the control plane images are available or available to pull to the machine.

- Generates replacements and/or uses user supplied overwrites if component configs require version upgrades.

- Upgrades the control plane components or rolls back if any of them fails to come up.

- Applies the new CoreDNS and kube-proxy manifests and makes sure that all necessary RBAC rules are created.

- Creates new certificate and key files of the API server and backs up old files if they're about to expire in 180 days.

kubeadm upgrade node does the following on additional control plane nodes:

- Fetches the kubeadm ClusterConfiguration from the cluster.

- Optionally backs up the kube-apiserver certificate.

- Upgrades the static Pod manifests for the control plane components.

- Upgrades the kubelet configuration for this node.

kubeadm upgrade node does the following on worker nodes:

- Fetches the kubeadm ClusterConfiguration from the cluster.

- Upgrades the kubelet configuration for this node.

1.4 - Adding Windows nodes

Kubernetes v1.18 [beta]

You can use Kubernetes to run a mixture of Linux and Windows nodes, so you can mix Pods that run on Linux with Pods that run on Windows. This page shows how to register Windows nodes to your cluster.

Before you begin

Your Kubernetes server must be at or later than version 1.17. To check the version, enter kubectl version.

- Obtain a Windows Server 2019 license (or higher) in order to configure the Windows node that hosts Windows containers. If you are using VXLAN/Overlay networking you must also have KB4489899 installed.

- A Linux-based Kubernetes kubeadm cluster in which you have access to the control plane (see Creating a single control-plane cluster with kubeadm).

Objectives

- Register a Windows node to the cluster

- Configure networking so Pods and Services on Linux and Windows can communicate with each other

Getting Started: Adding a Windows Node to Your Cluster

Networking Configuration

Once you have a Linux-based Kubernetes control-plane node you are ready to choose a networking solution. This guide illustrates using Flannel in VXLAN mode for simplicity.

Configuring Flannel

-

Prepare Kubernetes control plane for Flannel

Some minor preparation is recommended on the Kubernetes control plane in our cluster. It is recommended to enable bridged IPv4 traffic to iptables chains when using Flannel. The following command must be run on all Linux nodes:

sudo sysctl net.bridge.bridge-nf-call-iptables=1 -

Download & configure Flannel for Linux

Download the most recent Flannel manifest:

wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.ymlModify the

net-conf.jsonsection of the flannel manifest in order to set the VNI to 4096 and the Port to 4789. It should look as follows:net-conf.json: | { "Network": "10.244.0.0/16", "Backend": { "Type": "vxlan", "VNI": 4096, "Port": 4789 } }Note: The VNI must be set to 4096 and port 4789 for Flannel on Linux to interoperate with Flannel on Windows. See the VXLAN documentation. for an explanation of these fields.Note: To use L2Bridge/Host-gateway mode instead change the value ofTypeto"host-gw"and omitVNIandPort. -

- Apply the Flannel manifest and validate

Let's apply the Flannel configuration:

kubectl apply -f kube-flannel.yml

After a few minutes, you should see all the pods as running if the Flannel pod network was deployed.

kubectl get pods -n kube-system

The output should include the Linux flannel DaemonSet as running:

NAMESPACE     NAME                    READY   STATUS    RESTARTS   AGE
...
kube-system   kube-flannel-ds-54954   1/1     Running   0          1m

- Add Windows Flannel and kube-proxy DaemonSets

Now you can add Windows-compatible versions of Flannel and kube-proxy. To ensure that you get a compatible version of kube-proxy, you'll need to substitute the tag of the image. The following example shows usage for Kubernetes v1.23.0, but you should adjust the version for your own deployment.

curl -L https://github.com/kubernetes-sigs/sig-windows-tools/releases/latest/download/kube-proxy.yml | sed 's/VERSION/v1.23.0/g' | kubectl apply -f -

kubectl apply -f https://github.com/kubernetes-sigs/sig-windows-tools/releases/latest/download/flannel-overlay.yml

Note: If you're using host-gateway, use https://github.com/kubernetes-sigs/sig-windows-tools/releases/latest/download/flannel-host-gw.yml instead.

Note: If you're using a different interface rather than Ethernet (for example, "Ethernet0 2") on the Windows nodes, you have to modify the line:

wins cli process run --path /k/flannel/setup.exe --args "--mode=overlay --interface=Ethernet"

in the flannel-host-gw.yml or flannel-overlay.yml file and specify your interface accordingly.

# Example

curl -L https://github.com/kubernetes-sigs/sig-windows-tools/releases/latest/download/flannel-overlay.yml | sed 's/Ethernet/Ethernet0 2/g' | kubectl apply -f -
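Before applying an edited manifest, it can help to check the net-conf.json values mechanically. The following is a minimal sketch, not part of the Flannel or sig-windows-tools tooling: the function name check_net_conf is hypothetical, and it assumes you have copied the JSON body of the net-conf.json section into a string. It only encodes the two rules stated in this guide (VNI 4096 and Port 4789 for vxlan; no VNI or Port for host-gw).

```python
import json

# Values this guide requires so that Flannel on Linux interoperates with Flannel on Windows.
REQUIRED_VNI = 4096
REQUIRED_PORT = 4789


def check_net_conf(net_conf_text):
    """Return a list of problems found in a net-conf.json body (empty if none).

    Hypothetical helper for illustration; it checks only the rules named in this guide.
    """
    conf = json.loads(net_conf_text)
    backend = conf.get("Backend", {})
    backend_type = backend.get("Type")
    problems = []
    if backend_type == "vxlan":
        if backend.get("VNI") != REQUIRED_VNI:
            problems.append(f"VNI should be {REQUIRED_VNI}, found {backend.get('VNI')!r}")
        if backend.get("Port") != REQUIRED_PORT:
            problems.append(f"Port should be {REQUIRED_PORT}, found {backend.get('Port')!r}")
    elif backend_type == "host-gw":
        # In host-gateway mode the guide says to omit both fields.
        for key in ("VNI", "Port"):
            if key in backend:
                problems.append(f"{key} should be omitted in host-gw mode")
    else:
        problems.append(f"unexpected backend type: {backend_type!r}")
    return problems


if __name__ == "__main__":
    example = '{"Network": "10.244.0.0/16", "Backend": {"Type": "vxlan", "VNI": 4096, "Port": 4789}}'
    print(check_net_conf(example))
```

An empty list means the configuration matches what this guide expects; any other result lists the fields to correct before running kubectl apply.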

Joining a Windows worker node

Install Docker EE

Install the Containers feature

Install-WindowsFeature -Name containers

Install Docker. Instructions to do so are available at Install Docker Engine - Enterprise on Windows Servers.

Install wins, kubelet, and kubeadm

curl.exe -LO https://raw.githubusercontent.com/kubernetes-sigs/sig-windows-tools/master/kubeadm/scripts/PrepareNode.ps1

.\PrepareNode.ps1 -KubernetesVersion v1.23.0

Run kubeadm to join the node

Use the command that was given to you when you ran kubeadm init on a control plane host.

If you no longer have this command, or the token has expired, you can run kubeadm token create --print-join-command (on a control plane host) to generate a new token and join command.

Install containerD

curl.exe -LO https://github.com/kubernetes-sigs/sig-windows-tools/releases/latest/download/Install-Containerd.ps1

.\Install-Containerd.ps1

To install a specific version of containerD specify the version with -ContainerDVersion.

# Example

.\Install-Containerd.ps1 -ContainerDVersion 1.4.1

If you're using a different interface rather than Ethernet (for example, "Ethernet0 2") on the Windows nodes, specify the name with -netAdapterName.

# Example

.\Install-Containerd.ps1 -netAdapterName "Ethernet0 2"

Install wins, kubelet, and kubeadm

curl.exe -LO https://raw.githubusercontent.com/kubernetes-sigs/sig-windows-tools/master/kubeadm/scripts/PrepareNode.ps1

.\PrepareNode.ps1 -KubernetesVersion v1.23.0 -ContainerRuntime containerD

Run kubeadm to join the node

Use the command that was given to you when you ran kubeadm init on a control plane host.

If you no longer have this command, or the token has expired, you can run kubeadm token create --print-join-command (on a control plane host) to generate a new token and join command.

Note: If using CRI-containerD, add --cri-socket "npipe:////./pipe/containerd-containerd" to the kubeadm call.

Verifying your installation

You should now be able to view the Windows node in your cluster by running:

kubectl get nodes -o wide

If your new node is in the NotReady state it is likely because the flannel image is still downloading.

You can check the progress as before by checking on the flannel pods in the kube-system namespace:

kubectl -n kube-system get pods -l app=flannel

Once the flannel Pod is running, your node should enter the Ready state and then be available to handle workloads.

What's next

1.5 - Upgrading Windows nodes

Kubernetes v1.18 [beta]

This page explains how to upgrade a Windows node created with kubeadm.

Before you begin

You need to have a Kubernetes cluster, and the kubectl command-line tool must be configured to communicate with your cluster. It is recommended to run this tutorial on a cluster with at least two nodes that are not acting as control plane hosts. If you do not already have a cluster, you can create one by using minikube or one of the Kubernetes playgrounds.

Your Kubernetes server must be at or later than version 1.17. To check the version, enter kubectl version.

- Familiarize yourself with the process for upgrading the rest of your kubeadm cluster. You will want to upgrade the control plane nodes before upgrading your Windows nodes.

Upgrading worker nodes

Upgrade kubeadm

- From the Windows node, upgrade kubeadm:

# replace v1.23.0 with your desired version

curl.exe -Lo C:\k\kubeadm.exe https://dl.k8s.io//bin/windows/amd64/kubeadm.exe

Drain the node

- From a machine with access to the Kubernetes API, prepare the node for maintenance by marking it unschedulable and evicting the workloads:

# replace <node-to-drain> with the name of the node you are draining

kubectl drain <node-to-drain> --ignore-daemonsets

You should see output similar to this:

node/ip-172-31-85-18 cordoned

node/ip-172-31-85-18 drained

Upgrade the kubelet configuration

- From the Windows node, call the following command to sync new kubelet configuration:

kubeadm upgrade node

Upgrade kubelet

- From the Windows node, upgrade and restart the kubelet:

stop-service kubelet

curl.exe -Lo C:\k\kubelet.exe https://dl.k8s.io//bin/windows/amd64/kubelet.exe

restart-service kubelet

Uncordon the node

- From a machine with access to the Kubernetes API, bring the node back online by marking it schedulable:

# replace <node-to-drain> with the name of your node

kubectl uncordon <node-to-drain>

Upgrade kube-proxy

- From a machine with access to the Kubernetes API, run the following, again replacing v1.23.0 with your desired version:

curl -L https://github.com/kubernetes-sigs/sig-windows-tools/releases/latest/download/kube-proxy.yml | sed 's/VERSION/v1.23.0/g' | kubectl apply -f -

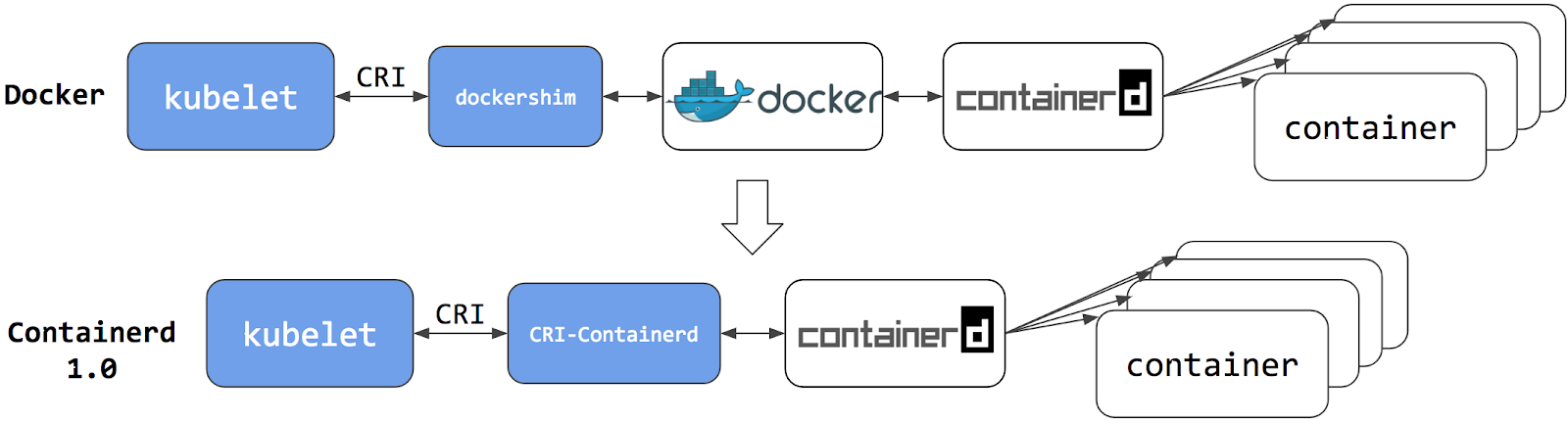

2 - Migrating from dockershim

This section presents information you need to know when migrating from dockershim to other container runtimes.

Since the announcement of dockershim deprecation in Kubernetes 1.20, there have been questions about how this will affect various workloads and Kubernetes installations. Our Dockershim Removal FAQ is there to help you understand the problem better.

It is recommended to migrate from dockershim to an alternative container runtime. Check out the container runtimes section to learn about your options. Make sure to report issues you encounter during the migration, so that they can be fixed in a timely manner and your cluster is ready for dockershim removal.

2.1 - Changing the Container Runtime on a Node from Docker Engine to containerd

This task outlines the steps needed to update your container runtime from Docker to containerd. It is applicable to cluster operators running Kubernetes 1.23 or earlier. It also covers an example scenario for migrating from dockershim to containerd; alternative container runtimes can be picked from this page.

Before you begin

Install containerd. For more information, see containerd's installation documentation; for specific prerequisites, follow the containerd guide.

Drain the node

# replace <node-to-drain> with the name of your node you are draining

kubectl drain <node-to-drain> --ignore-daemonsets

Stop the Docker daemon

systemctl stop kubelet

systemctl disable docker.service --now

Install Containerd

This page contains detailed steps to install containerd.

- Install the containerd.io package from the official Docker repositories. Instructions for setting up the Docker repository for your respective Linux distribution and installing the containerd.io package can be found at Install Docker Engine.

- Configure containerd:

sudo mkdir -p /etc/containerd

containerd config default | sudo tee /etc/containerd/config.toml

- Restart containerd:

sudo systemctl restart containerd

Start a PowerShell session, set $Version to the desired version (for example, $Version="1.4.3"), and then run the following commands:

- Download containerd:

curl.exe -L https://github.com/containerd/containerd/releases/download/v$Version/containerd-$Version-windows-amd64.tar.gz -o containerd-windows-amd64.tar.gz

tar.exe xvf .\containerd-windows-amd64.tar.gz

- Extract and configure:

Copy-Item -Path ".\bin\" -Destination "$Env:ProgramFiles\containerd" -Recurse -Force

cd $Env:ProgramFiles\containerd\

.\containerd.exe config default | Out-File config.toml -Encoding ascii

# Review the configuration. Depending on setup you may want to adjust:
# - the sandbox_image (Kubernetes pause image)
# - cni bin_dir and conf_dir locations

Get-Content config.toml

# (Optional - but highly recommended) Exclude containerd from Windows Defender Scans

Add-MpPreference -ExclusionProcess "$Env:ProgramFiles\containerd\containerd.exe"

- Start containerd:

.\containerd.exe --register-service

Start-Service containerd

Configure the kubelet to use containerd as its container runtime

Edit the file /var/lib/kubelet/kubeadm-flags.env and add the containerd runtime to the flags: --container-runtime=remote and --container-runtime-endpoint=unix:///run/containerd/containerd.sock.

If you use kubeadm, also consider the following:

The kubeadm tool stores the CRI socket for each host as an annotation in the Node object for that host.

To change it you must do the following:

Execute kubectl edit no <NODE-NAME> on a machine that has the kubeadm /etc/kubernetes/admin.conf file.

This will start a text editor where you can edit the Node object.

To choose a text editor you can set the KUBE_EDITOR environment variable.

- Change the value of kubeadm.alpha.kubernetes.io/cri-socket from /var/run/dockershim.sock to the CRI socket path of your choice (for example unix:///run/containerd/containerd.sock).

Note that new CRI socket paths should ideally be prefixed with unix://.

- Save the changes in the text editor, which will update the Node object.

Restart the kubelet

systemctl start kubelet

Verify that the node is healthy

Run kubectl get nodes -o wide; containerd should appear as the runtime for the node you just changed.

Remove Docker Engine

Finally, if everything goes well, remove Docker.

CentOS:

sudo yum remove docker-ce docker-ce-cli

Debian:

sudo apt-get purge docker-ce docker-ce-cli

Fedora:

sudo dnf remove docker-ce docker-ce-cli

Ubuntu:

sudo apt-get purge docker-ce docker-ce-cli

2.2 - Find Out What Container Runtime is Used on a Node

This page outlines steps to find out what container runtime the nodes in your cluster use.

Depending on the way you run your cluster, the container runtime for the nodes may

have been pre-configured or you need to configure it. If you're using a managed

Kubernetes service, there might be vendor-specific ways to check what container runtime is

configured for the nodes. The method described on this page should work whenever

the execution of kubectl is allowed.

Before you begin

Install and configure kubectl. See Install Tools section for details.

Find out the container runtime used on a Node

Use kubectl to fetch and show node information:

kubectl get nodes -o wide

The output is similar to the following. The column CONTAINER-RUNTIME outputs

the runtime and its version.

# For dockershim

NAME STATUS VERSION CONTAINER-RUNTIME

node-1 Ready v1.16.15 docker://19.3.1

node-2 Ready v1.16.15 docker://19.3.1

node-3 Ready v1.16.15 docker://19.3.1

# For containerd

NAME STATUS VERSION CONTAINER-RUNTIME

node-1 Ready v1.19.6 containerd://1.4.1

node-2 Ready v1.19.6 containerd://1.4.1

node-3 Ready v1.19.6 containerd://1.4.1

Find out more information about container runtimes on the Container Runtimes page.
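If you need the same information in a script rather than in a table, the CONTAINER-RUNTIME column corresponds to the status.nodeInfo.containerRuntimeVersion field of each Node object. The following is a minimal sketch, assuming you have saved the output of kubectl get nodes -o json to a string; the function name runtimes_by_node and the sample data are hypothetical.

```python
import json


def runtimes_by_node(nodes_json_text):
    """Map each node name to a (runtime, version) tuple.

    Expects the text produced by `kubectl get nodes -o json`.
    Hypothetical helper for illustration only.
    """
    result = {}
    for node in json.loads(nodes_json_text).get("items", []):
        name = node["metadata"]["name"]
        # The field looks like "containerd://1.4.1" or "docker://19.3.1".
        runtime_version = node["status"]["nodeInfo"]["containerRuntimeVersion"]
        runtime, _, version = runtime_version.partition("://")
        result[name] = (runtime, version)
    return result


if __name__ == "__main__":
    # Made-up sample shaped like the kubectl output; real output has many more fields.
    sample = json.dumps({
        "items": [
            {"metadata": {"name": "node-1"},
             "status": {"nodeInfo": {"containerRuntimeVersion": "docker://19.3.1"}}},
            {"metadata": {"name": "node-2"},
             "status": {"nodeInfo": {"containerRuntimeVersion": "containerd://1.4.1"}}},
        ]
    })
    for node_name, (runtime, version) in runtimes_by_node(sample).items():
        print(node_name, runtime, version)
```

Nodes whose runtime is reported as docker are the ones that rely on dockershim.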

2.3 - Check whether Dockershim deprecation affects you

The dockershim component of Kubernetes allows you to use Docker as Kubernetes's container runtime.

Kubernetes' built-in dockershim component was deprecated in release v1.20.

This page explains how your cluster could be using Docker as a container runtime,

provides details on the role that dockershim plays when in use, and shows steps

you can take to check whether any workloads could be affected by dockershim deprecation.

Finding out whether your app has a dependency on Docker

If you are using Docker for building your application containers, you can still run these containers on any container runtime. This use of Docker does not count as a dependency on Docker as a container runtime.

When an alternative container runtime is used, executing Docker commands may either not work or yield unexpected output. This is how you can find out whether you have a dependency on Docker:

- Make sure no privileged Pods execute Docker commands (like docker ps), restart the Docker service (commands such as systemctl restart docker.service), or modify Docker-specific files such as /etc/docker/daemon.json.

- Check for any private registries or image mirror settings in the Docker configuration file (like /etc/docker/daemon.json). Those typically need to be reconfigured for another container runtime.

- Check that scripts and apps running on nodes outside of your Kubernetes infrastructure do not execute Docker commands. It might be:

  - SSH to nodes to troubleshoot;

  - Node startup scripts;

  - Monitoring and security agents installed on nodes directly.

- Third-party tools that perform the above-mentioned privileged operations. See Migrating telemetry and security agents from dockershim for more information.

- Make sure there are no indirect dependencies on dockershim behavior. This is an edge case and unlikely to affect your application. Some tooling may be configured to react to Docker-specific behaviors, for example, raise an alert on specific metrics or search for a specific log message as part of troubleshooting instructions. If you have such tooling configured, test the behavior on a test cluster before migration.

Dependency on Docker explained

A container runtime is software that can execute the containers that make up a Kubernetes pod. Kubernetes is responsible for orchestration and scheduling of Pods; on each node, the kubelet uses the container runtime interface as an abstraction so that you can use any compatible container runtime.

In its earliest releases, Kubernetes offered compatibility with one container runtime: Docker.

Later in the Kubernetes project's history, cluster operators wanted to adopt additional container runtimes.

The CRI was designed to allow this kind of flexibility - and the kubelet began supporting CRI. However,

because Docker existed before the CRI specification was invented, the Kubernetes project created an

adapter component, dockershim. The dockershim adapter allows the kubelet to interact with Docker as

if Docker were a CRI compatible runtime.

You can read about it in the Kubernetes Containerd integration goes GA blog post.

Switching to Containerd as a container runtime eliminates the middleman. All the same containers can be run by container runtimes like Containerd as before. But now, since containers schedule directly with the container runtime, they are not visible to Docker. So any Docker tooling or fancy UI you might have used before to check on these containers is no longer available.

You cannot get container information using docker ps or docker inspect commands. As you cannot list containers, you cannot get logs, stop containers, or execute something inside a container using docker exec.

You can still pull images or build them using the docker build command. But images built or pulled by Docker would not be visible to the container runtime and Kubernetes. They need to be pushed to some registry to allow them to be used by Kubernetes.

2.4 - Migrating telemetry and security agents from dockershim

Kubernetes' support for direct integration with Docker Engine is deprecated, and will be removed. Most apps do not have a direct dependency on the runtime hosting their containers. However, there are still a lot of telemetry and monitoring agents that depend on Docker to collect container metadata, logs, and metrics. This document aggregates information on how to detect these dependencies and links on how to migrate these agents to use generic tools or alternative runtimes.

Telemetry and security agents

Within a Kubernetes cluster there are a few different ways to run telemetry or security agents. Some agents have a direct dependency on Docker Engine when they run as DaemonSets or directly on nodes.

Why do some telemetry agents communicate with Docker Engine?

Historically, Kubernetes was written to work specifically with Docker Engine. Kubernetes took care of networking and scheduling, relying on Docker Engine for launching and running containers (within Pods) on a node. Some information that is relevant to telemetry, such as a pod name, is only available from Kubernetes components. Other data, such as container metrics, is not the responsibility of the container runtime. Early telemetry agents needed to query the container runtime and Kubernetes to report an accurate picture. Over time, Kubernetes gained the ability to support multiple runtimes, and now supports any runtime that is compatible with the container runtime interface.

Some telemetry agents rely specifically on Docker Engine tooling. For example, an agent might run a command such as docker ps or docker top to list containers and processes or docker logs to receive streamed logs. If nodes in your existing cluster use Docker Engine, and you switch to a different container runtime, these commands will not work any longer.

Identify DaemonSets that depend on Docker Engine

If a pod wants to make calls to the dockerd running on the node, the pod must either:

- mount the filesystem containing the Docker daemon's privileged socket, as a volume; or

- mount the specific path of the Docker daemon's privileged socket directly, also as a volume.

For example: on COS images, Docker exposes its Unix domain socket at /var/run/docker.sock. This means that the pod spec will include a hostPath volume mount of /var/run/docker.sock.

Here's a sample shell script to find Pods that have a mount directly mapping the

Docker socket. This script outputs the namespace and name of the pod. You can

remove the grep '/var/run/docker.sock' to review other mounts.

kubectl get pods --all-namespaces \

-o=jsonpath='{range .items[*]}{"\n"}{.metadata.namespace}{":\t"}{.metadata.name}{":\t"}{range .spec.volumes[*]}{.hostPath.path}{", "}{end}{end}' \

| sort \

| grep '/var/run/docker.sock'

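Note: There are alternative ways for a Pod to access Docker on the host. For instance, the parent directory /var/run may be mounted instead of the full path (like in this example). The script above only detects the most common uses.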
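To also catch Pods that mount a parent directory of the socket, the same check can be done on the JSON form of the Pod list. The following is a minimal sketch, assuming the output of kubectl get pods --all-namespaces -o json is available as a string; the function name pods_mounting_docker_socket is hypothetical. It inspects hostPath volumes only and does not follow symbolic links (for example, a /var/run that points to /run), so treat its result as a starting point rather than a complete audit.

```python
import json

DOCKER_SOCKET = "/var/run/docker.sock"


def pods_mounting_docker_socket(pods_json_text, include_parent_dirs=True):
    """Return (namespace, pod name, hostPath) for Pods whose hostPath volumes expose the Docker socket.

    Hypothetical helper for illustration; expects `kubectl get pods --all-namespaces -o json` output.
    """
    matches = []
    for pod in json.loads(pods_json_text).get("items", []):
        metadata = pod.get("metadata", {})
        for volume in pod.get("spec", {}).get("volumes", []):
            path = (volume.get("hostPath") or {}).get("path")
            if not path:
                continue
            normalized = path.rstrip("/")
            is_socket = normalized == DOCKER_SOCKET
            # A mount of "/", "/var", or "/var/run" also makes the socket reachable.
            is_parent = include_parent_dirs and DOCKER_SOCKET.startswith(normalized + "/")
            if is_socket or is_parent:
                matches.append((metadata.get("namespace"), metadata.get("name"), path))
                break  # one match per Pod is enough
    return matches
```

Passing include_parent_dirs=False restricts the result to Pods that mount the socket path itself, which corresponds to what the shell script above reports.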

Detecting Docker dependency from node agents

If your cluster nodes are customized and have additional security and telemetry agents installed on them, make sure to check with the vendor of each agent whether it has a dependency on Docker.

Telemetry and security agent vendors

We keep a work-in-progress version of migration instructions for various telemetry and security agent vendors in a Google doc. Please contact the vendor to get up-to-date instructions for migrating from dockershim.

3 - Certificates

When using client certificate authentication, you can generate certificates

manually through easyrsa, openssl or cfssl.

easyrsa

easyrsa can manually generate certificates for your cluster.

- Download, unpack, and initialize the patched version of easyrsa3.

curl -LO https://storage.googleapis.com/kubernetes-release/easy-rsa/easy-rsa.tar.gz
tar xzf easy-rsa.tar.gz
cd easy-rsa-master/easyrsa3
./easyrsa init-pki

- Generate a new certificate authority (CA). --batch sets automatic mode; --req-cn specifies the Common Name (CN) for the CA's new root certificate.

./easyrsa --batch "--req-cn=${MASTER_IP}@`date +%s`" build-ca nopass

- Generate server certificate and key. The argument --subject-alt-name sets the possible IPs and DNS names the API server will be accessed with. The MASTER_CLUSTER_IP is usually the first IP from the service CIDR that is specified as the --service-cluster-ip-range argument for both the API server and the controller manager component. The argument --days is used to set the number of days after which the certificate expires. The sample below also assumes that you are using cluster.local as the default DNS domain name.

./easyrsa --subject-alt-name="IP:${MASTER_IP},"\
"IP:${MASTER_CLUSTER_IP},"\
"DNS:kubernetes,"\
"DNS:kubernetes.default,"\
"DNS:kubernetes.default.svc,"\
"DNS:kubernetes.default.svc.cluster,"\
"DNS:kubernetes.default.svc.cluster.local" \
--days=10000 \
build-server-full server nopass

- Copy pki/ca.crt, pki/issued/server.crt, and pki/private/server.key to your directory.

- Fill in and add the following parameters into the API server start parameters:

--client-ca-file=/yourdirectory/ca.crt
--tls-cert-file=/yourdirectory/server.crt
--tls-private-key-file=/yourdirectory/server.key
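The value passed to --subject-alt-name can be derived rather than typed by hand. The following is a minimal sketch of that derivation, assuming (as the steps above do) that MASTER_CLUSTER_IP is the first IP of the service CIDR and that the DNS domain is cluster.local. The function names and the addresses in the usage example are hypothetical placeholders; substitute your own API server IP and the CIDR you pass to --service-cluster-ip-range.

```python
import ipaddress


def first_service_ip(service_cidr):
    """Return the first usable IP in the service CIDR (index 1; index 0 is the network address)."""
    network = ipaddress.ip_network(service_cidr)
    return str(network[1])


def subject_alt_names(master_ip, service_cidr):
    """Build the comma-separated --subject-alt-name value used in the easyrsa step.

    Assumes cluster.local as the DNS domain, matching the sample in this section.
    """
    entries = [
        f"IP:{master_ip}",
        f"IP:{first_service_ip(service_cidr)}",
        "DNS:kubernetes",
        "DNS:kubernetes.default",
        "DNS:kubernetes.default.svc",
        "DNS:kubernetes.default.svc.cluster",
        "DNS:kubernetes.default.svc.cluster.local",
    ]
    return ",".join(entries)


if __name__ == "__main__":
    # Placeholder values for illustration only.
    print(subject_alt_names("192.0.2.10", "10.96.0.0/12"))
```

The printed string can be passed as a single quoted value to --subject-alt-name. If your cluster uses a different DNS domain, the last two DNS entries need to be adjusted accordingly.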

openssl

openssl can manually generate certificates for your cluster.

- Generate a 2048-bit ca.key:

openssl genrsa -out ca.key 2048

- Using the ca.key, generate a ca.crt (use -days to set the certificate validity period):

openssl req -x509 -new -nodes -key ca.key -subj "/CN=${MASTER_IP}" -days 10000 -out ca.crt

- Generate a 2048-bit server.key:

openssl genrsa -out server.key 2048

- Create a config file for generating a Certificate Signing Request (CSR). Be sure to substitute the values marked with angle brackets (e.g. <MASTER_IP>) with real values before saving this to a file (e.g. csr.conf). Note that the value for MASTER_CLUSTER_IP is the service cluster IP for the API server as described in the previous subsection. The sample below also assumes that you are using cluster.local as the default DNS domain name.

[ req ]
default_bits = 2048
prompt = no
default_md = sha256
req_extensions = req_ext
distinguished_name = dn

[ dn ]
C = <country>
ST = <state>
L = <city>
O = <organization>
OU = <organization unit>
CN = <MASTER_IP>

[ req_ext ]
subjectAltName = @alt_names

[ alt_names ]
DNS.1 = kubernetes
DNS.2 = kubernetes.default
DNS.3 = kubernetes.default.svc
DNS.4 = kubernetes.default.svc.cluster
DNS.5 = kubernetes.default.svc.cluster.local
IP.1 = <MASTER_IP>
IP.2 = <MASTER_CLUSTER_IP>

[ v3_ext ]
authorityKeyIdentifier=keyid,issuer:always
basicConstraints=CA:FALSE
keyUsage=keyEncipherment,dataEncipherment
extendedKeyUsage=serverAuth,clientAuth
subjectAltName=@alt_names

- Generate the certificate signing request based on the config file:

openssl req -new -key server.key -out server.csr -config csr.conf

- Generate the server certificate using the ca.key, ca.crt and server.csr:

openssl x509 -req -in server.csr -CA ca.crt -CAkey ca.key \
-CAcreateserial -out server.crt -days 10000 \
-extensions v3_ext -extfile csr.conf

- View the certificate signing request:

openssl req -noout -text -in ./server.csr

- View the certificate:

openssl x509 -noout -text -in ./server.crt

Finally, add the same parameters into the API server start parameters.

cfssl

cfssl is another tool for certificate generation.

- Download, unpack and prepare the command line tools as shown below. Note that you may need to adapt the sample commands based on the hardware architecture and cfssl version you are using.

curl -L https://github.com/cloudflare/cfssl/releases/download/v1.5.0/cfssl_1.5.0_linux_amd64 -o cfssl
chmod +x cfssl
curl -L https://github.com/cloudflare/cfssl/releases/download/v1.5.0/cfssljson_1.5.0_linux_amd64 -o cfssljson
chmod +x cfssljson
curl -L https://github.com/cloudflare/cfssl/releases/download/v1.5.0/cfssl-certinfo_1.5.0_linux_amd64 -o cfssl-certinfo
chmod +x cfssl-certinfo

- Create a directory to hold the artifacts and initialize cfssl:

mkdir cert
cd cert
../cfssl print-defaults config > config.json
../cfssl print-defaults csr > csr.json

- Create a JSON config file for generating the CA file, for example, ca-config.json:

{
  "signing": {
    "default": {
      "expiry": "8760h"
    },
    "profiles": {
      "kubernetes": {
        "usages": [
          "signing",
          "key encipherment",
          "server auth",
          "client auth"
        ],
        "expiry": "8760h"
      }
    }
  }
}

- Create a JSON config file for CA certificate signing request (CSR), for example, ca-csr.json. Be sure to replace the values marked with angle brackets with real values you want to use.

{
  "CN": "kubernetes",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [{
    "C": "<country>",
    "ST": "<state>",
    "L": "<city>",
    "O": "<organization>",
    "OU": "<organization unit>"
  }]
}

- Generate CA key (ca-key.pem) and certificate (ca.pem):

../cfssl gencert -initca ca-csr.json | ../cfssljson -bare ca

- Create a JSON config file for generating keys and certificates for the API server, for example, server-csr.json. Be sure to replace the values in angle brackets with real values you want to use. The MASTER_CLUSTER_IP is the service cluster IP for the API server as described in the previous subsection. The sample below also assumes that you are using cluster.local as the default DNS domain name.

{
  "CN": "kubernetes",
  "hosts": [
    "127.0.0.1",
    "<MASTER_IP>",
    "<MASTER_CLUSTER_IP>",
    "kubernetes",
    "kubernetes.default",
    "kubernetes.default.svc",
    "kubernetes.default.svc.cluster",
    "kubernetes.default.svc.cluster.local"
  ],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [{
    "C": "<country>",
    "ST": "<state>",
    "L": "<city>",
    "O": "<organization>",
    "OU": "<organization unit>"
  }]
}

- Generate the key and certificate for the API server, which are by default saved into the files server-key.pem and server.pem respectively:

../cfssl gencert -ca=ca.pem -ca-key=ca-key.pem \
--config=ca-config.json -profile=kubernetes \
server-csr.json | ../cfssljson -bare server

Distributing Self-Signed CA Certificate

A client node may refuse to recognize a self-signed CA certificate as valid. For a non-production deployment, or for a deployment that runs behind a company firewall, you can distribute a self-signed CA certificate to all clients and refresh the local list for valid certificates.

On each client, perform the following operations:

sudo cp ca.crt /usr/local/share/ca-certificates/kubernetes.crt

sudo update-ca-certificates

Updating certificates in /etc/ssl/certs...

1 added, 0 removed; done.

Running hooks in /etc/ca-certificates/update.d....

done.

Certificates API

You can use the certificates.k8s.io API to provision

x509 certificates to use for authentication as documented

here.

4 - Manage Memory, CPU, and API Resources

4.1 - Configure Default Memory Requests and Limits for a Namespace

This page shows how to configure default memory requests and limits for a namespace.

A Kubernetes cluster can be divided into namespaces. Once you have a namespace that has a default memory limit, and you then try to create a Pod with a container that does not specify its own memory limit, then the control plane assigns the default memory limit to that container.

Kubernetes assigns a default memory request under certain conditions that are explained later in this topic.

Before you begin

You need to have a Kubernetes cluster, and the kubectl command-line tool must be configured to communicate with your cluster. It is recommended to run this tutorial on a cluster with at least two nodes that are not acting as control plane hosts. If you do not already have a cluster, you can create one by using minikube or one of the Kubernetes playgrounds.

You must have access to create namespaces in your cluster.

Each node in your cluster must have at least 2 GiB of memory.

Create a namespace

Create a namespace so that the resources you create in this exercise are isolated from the rest of your cluster.

kubectl create namespace default-mem-example

Create a LimitRange and a Pod

Here's a manifest for an example LimitRange. The manifest specifies a default memory request and a default memory limit.

apiVersion: v1
kind: LimitRange
metadata:
  name: mem-limit-range
spec:
  limits:
  - default:
      memory: 512Mi
    defaultRequest:
      memory: 256Mi
    type: Container

Create the LimitRange in the default-mem-example namespace:

kubectl apply -f https://k8s.io/examples/admin/resource/memory-defaults.yaml --namespace=default-mem-example

Now if you create a Pod in the default-mem-example namespace, and any container within that Pod does not specify its own values for memory request and memory limit, then the control plane applies default values: a memory request of 256MiB and a memory limit of 512MiB.

Here's an example manifest for a Pod that has one container. The container does not specify a memory request and limit.

apiVersion: v1
kind: Pod
metadata:
  name: default-mem-demo
spec:
  containers:
  - name: default-mem-demo-ctr
    image: nginx

Create the Pod.

kubectl apply -f https://k8s.io/examples/admin/resource/memory-defaults-pod.yaml --namespace=default-mem-example

View detailed information about the Pod:

kubectl get pod default-mem-demo --output=yaml --namespace=default-mem-example

The output shows that the Pod's container has a memory request of 256 MiB and a memory limit of 512 MiB. These are the default values specified by the LimitRange.

containers:
- image: nginx
  imagePullPolicy: Always
  name: default-mem-demo-ctr
  resources:
    limits:
      memory: 512Mi
    requests:
      memory: 256Mi

Delete your Pod:

kubectl delete pod default-mem-demo --namespace=default-mem-example

What if you specify a container's limit, but not its request?

Here's a manifest for a Pod that has one container. The container specifies a memory limit, but not a request:

apiVersion: v1
kind: Pod
metadata:
  name: default-mem-demo-2
spec:
  containers:
  - name: default-mem-demo-2-ctr
    image: nginx
    resources:
      limits:
        memory: "1Gi"

Create the Pod:

kubectl apply -f https://k8s.io/examples/admin/resource/memory-defaults-pod-2.yaml --namespace=default-mem-example

View detailed information about the Pod:

kubectl get pod default-mem-demo-2 --output=yaml --namespace=default-mem-example

The output shows that the container's memory request is set to match its memory limit. Notice that the container was not assigned the default memory request value of 256Mi.

resources:
  limits:
    memory: 1Gi
  requests:
    memory: 1Gi

What if you specify a container's request, but not its limit?

Here's a manifest for a Pod that has one container. The container specifies a memory request, but not a limit:

apiVersion: v1
kind: Pod
metadata:
  name: default-mem-demo-3
spec:
  containers:
  - name: default-mem-demo-3-ctr
    image: nginx
    resources:
      requests:
        memory: "128Mi"

Create the Pod:

kubectl apply -f https://k8s.io/examples/admin/resource/memory-defaults-pod-3.yaml --namespace=default-mem-example

View the Pod's specification:

kubectl get pod default-mem-demo-3 --output=yaml --namespace=default-mem-example

The output shows that the container's memory request is set to the value specified in the container's manifest. The container is limited to use no more than 512MiB of memory, which matches the default memory limit for the namespace.

resources:
  limits:
    memory: 512Mi
  requests:
    memory: 128Mi
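The three cases above follow one small rule set. The following is a minimal sketch that models the behavior described on this page; it is not the control plane's implementation. The function name apply_memory_defaults is hypothetical, and the default values are the ones from the example LimitRange (512Mi limit, 256Mi request).

```python
def apply_memory_defaults(resources, default_limit="512Mi", default_request="256Mi"):
    """Return the effective memory request and limit for one container.

    `resources` mirrors a container's `resources` field, for example
    {"limits": {"memory": "1Gi"}}; a missing key means "not specified".
    Illustrative model of the outcomes shown on this page only.
    """
    limit = resources.get("limits", {}).get("memory")
    request = resources.get("requests", {}).get("memory")
    if limit is None:
        # No limit given: the namespace default limit applies.
        limit = default_limit
        if request is None:
            # Neither value given: the default request applies as well.
            request = default_request
    elif request is None:
        # Limit given without a request: the request is set to match the limit.
        request = limit
    return {"limits": {"memory": limit}, "requests": {"memory": request}}


if __name__ == "__main__":
    print(apply_memory_defaults({}))                                  # default-mem-demo
    print(apply_memory_defaults({"limits": {"memory": "1Gi"}}))       # default-mem-demo-2
    print(apply_memory_defaults({"requests": {"memory": "128Mi"}}))   # default-mem-demo-3
```

Each call corresponds to one of the example Pods, and the returned values match the resources sections shown in the kubectl output above.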

Motivation for default memory limits and requests

If your namespace has a memory resource quota configured, it is helpful to have a default value in place for memory limit. Here are three of the restrictions that a resource quota imposes on a namespace:

- For every Pod that runs in the namespace, the Pod and each of its containers must have a memory limit. (If you specify a memory limit for every container in a Pod, Kubernetes can infer the Pod-level memory limit by adding up the limits for its containers).

- Memory limits apply a resource reservation on the node where the Pod in question is scheduled. The total amount of memory reserved for all Pods in the namespace must not exceed a specified limit.

- The total amount of memory actually used by all Pods in the namespace must also not exceed a specified limit.

When you add a LimitRange:

If any Pod in that namespace includes a container that does not specify its own memory limit, the control plane applies the default memory limit to that container, and the Pod can be allowed to run in a namespace that is restricted by a memory ResourceQuota.
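
To make the interaction concrete, here is a minimal Python sketch of the first two restrictions. It is a simplified model rather than the quota controller itself: the `admit` function, its parameters, and the 2048 MiB quota are all hypothetical, and memory is expressed as whole MiB to keep the arithmetic simple.

```python
# Illustrative model of two memory quota rules; not Kubernetes source code.
# The function name, parameters, and numbers are hypothetical.


def admit(new_limits_mib, existing_limits_mib, quota_mib, default_limit_mib=None):
    """Decide whether a new Pod fits under a namespace memory quota.

    new_limits_mib holds one entry per container in the new Pod;
    None means the container did not specify a memory limit.
    """
    # A LimitRange default, if present, fills in any missing limit.
    filled = [
        limit if limit is not None else default_limit_mib
        for limit in new_limits_mib
    ]
    if any(limit is None for limit in filled):
        return False  # a container still has no memory limit
    # The total of all limits in the namespace must stay within the quota.
    return sum(existing_limits_mib) + sum(filled) <= quota_mib


# Without a default limit, a container that omits its limit is rejected.
print(admit([None], [512], quota_mib=2048))
# With a 512 MiB default, the same Pod fits under the quota.
print(admit([None], [512], quota_mib=2048, default_limit_mib=512))
```

The second call succeeds only because the default limit fills the gap, which is the motivation described above.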

Clean up

Delete your namespace:

kubectl delete namespace default-mem-example

What's next

For cluster administrators

- Configure Minimum and Maximum Memory Constraints for a Namespace

- Configure Minimum and Maximum CPU Constraints for a Namespace

For app developers

4.2 - Configure Default CPU Requests and Limits for a Namespace

This page shows how to configure default CPU requests and limits for a namespace.

A Kubernetes cluster can be divided into namespaces. If you create a Pod within a namespace that has a default CPU limit, and any container in that Pod does not specify its own CPU limit, then the control plane assigns the default CPU limit to that container.

Kubernetes assigns a default CPU request, but only under certain conditions that are explained later in this page.

Before you begin

You need to have a Kubernetes cluster, and the kubectl command-line tool must be configured to communicate with your cluster. It is recommended to run this tutorial on a cluster with at least two nodes that are not acting as control plane hosts. If you do not already have a cluster, you can create one by using minikube, or you can use a Kubernetes playground.

You must have access to create namespaces in your cluster.

If you're not already familiar with what Kubernetes means by 1.0 CPU, read about the meaning of CPU in Kubernetes.

Create a namespace

Create a namespace so that the resources you create in this exercise are isolated from the rest of your cluster.

kubectl create namespace default-cpu-example

Create a LimitRange and a Pod

Here's a manifest for an example LimitRange. The manifest specifies a default CPU request and a default CPU limit.

apiVersion: v1
kind: LimitRange
metadata:
  name: cpu-limit-range
spec:
  limits:
  - default:
      cpu: 1
    defaultRequest:
      cpu: 0.5
    type: Container

Create the LimitRange in the default-cpu-example namespace:

kubectl apply -f https://k8s.io/examples/admin/resource/cpu-defaults.yaml --namespace=default-cpu-example

Now if you create a Pod in the default-cpu-example namespace, and any container in that Pod does not specify its own values for CPU request and CPU limit, then the control plane applies default values: a default CPU request of 0.5 and a default CPU limit of 1.

Here's a manifest for a Pod that has one container. The container does not specify a CPU request and limit.

apiVersion: v1
kind: Pod
metadata:
  name: default-cpu-demo
spec:
  containers:
  - name: default-cpu-demo-ctr
    image: nginx

Create the Pod:

kubectl apply -f https://k8s.io/examples/admin/resource/cpu-defaults-pod.yaml --namespace=default-cpu-example

View the Pod's specification:

kubectl get pod default-cpu-demo --output=yaml --namespace=default-cpu-example

The output shows that the Pod's only container has a CPU request of 500m cpu (which you can read as “500 millicpu”), and a CPU limit of 1 cpu. These are the default values specified by the LimitRange.

containers:
- image: nginx
  imagePullPolicy: Always
  name: default-cpu-demo-ctr
  resources:
    limits:
      cpu: "1"
    requests:
      cpu: 500m
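
The LimitRange manifest writes the default request as 0.5, while the Pod output shows 500m; both describe the same amount of CPU. The Python sketch below illustrates the conversion. The helper names `to_millicpu` and `canonical` are hypothetical, and the sketch handles only the forms that appear on this page (plain numbers and the `m` suffix), not every quantity format that Kubernetes accepts.

```python
from decimal import Decimal


def to_millicpu(quantity):
    """Convert a CPU quantity such as 0.5, "1", or "500m" to millicpu."""
    text = str(quantity)
    if text.endswith("m"):
        # Already expressed in millicpu, for example "500m".
        return int(text[:-1])
    # A plain number counts whole CPUs; 1 CPU is 1000 millicpu.
    return int(Decimal(text) * 1000)


def canonical(quantity):
    """Render a quantity as whole CPUs when possible, otherwise as millicpu."""
    milli = to_millicpu(quantity)
    if milli % 1000 == 0:
        return str(milli // 1000)
    return f"{milli}m"


print(canonical(0.5))  # the default request from the LimitRange
print(canonical(1))    # the default limit from the LimitRange
```

Using `Decimal` rather than floating-point multiplication avoids rounding surprises for values such as 0.1.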

What if you specify a container's limit, but not its request?

Here's a manifest for a Pod that has one container. The container specifies a CPU limit, but not a request:

apiVersion: v1
kind: Pod
metadata:
  name: default-cpu-demo-2
spec:
  containers:
  - name: default-cpu-demo-2-ctr
    image: nginx
    resources:
      limits:
        cpu: "1"

Create the Pod:

kubectl apply -f https://k8s.io/examples/admin/resource/cpu-defaults-pod-2.yaml --namespace=default-cpu-example

View the specification of the Pod that you created:

kubectl get pod default-cpu-demo-2 --output=yaml --namespace=default-cpu-example

The output shows that the container's CPU request is set to match its CPU limit. Notice that the container was not assigned the default CPU request value of 0.5 cpu:

resources:
  limits:
    cpu: "1"
  requests:
    cpu: "1"

What if you specify a container's request, but not its limit?

Here's an example manifest for a Pod that has one container. The container specifies a CPU request, but not a limit:

apiVersion: v1
kind: Pod
metadata:
  name: default-cpu-demo-3
spec:
  containers:
  - name: default-cpu-demo-3-ctr
    image: nginx
    resources:
      requests:
        cpu: "0.75"

Create the Pod:

kubectl apply -f https://k8s.io/examples/admin/resource/cpu-defaults-pod-3.yaml --namespace=default-cpu-example

View the specification of the Pod that you created:

kubectl get pod default-cpu-demo-3 --output=yaml --namespace=default-cpu-example

The output shows that the container's CPU request is set to the value you specified at the time you created the Pod (in other words: it matches the manifest). However, the same container's CPU limit is set to 1 cpu, which is the default CPU limit for that namespace.

resources:
  limits:
    cpu: "1"
  requests:
    cpu: 750m

Motivation for default CPU limits and requests

If your namespace has a CPU resource quota configured, it is helpful to have a default value in place for CPU limit. Here are two of the restrictions that a CPU resource quota imposes on a namespace:

- For every Pod that runs in the namespace, each of its containers must have a CPU limit.

- CPU limits apply a resource reservation on the node where the Pod in question is scheduled. The total amount of CPU that is reserved for use by all Pods in the namespace must not exceed a specified limit.

When you add a LimitRange:

If any Pod in that namespace includes a container that does not specify its own CPU limit, the control plane applies the default CPU limit to that container, and the Pod can be allowed to run in a namespace that is restricted by a CPU ResourceQuota.

Clean up

Delete your namespace:

kubectl delete namespace default-cpu-example

What's next

For cluster administrators

- Configure Default Memory Requests and Limits for a Namespace

- Configure Minimum and Maximum Memory Constraints for a Namespace

- Configure Minimum and Maximum CPU Constraints for a Namespace

For app developers

4.3 - Configure Minimum and Maximum Memory Constraints for a Namespace

This page shows how to set minimum and maximum values for memory used by containers running in a namespace. You specify minimum and maximum memory values in a LimitRange object. If a Pod does not meet the constraints imposed by the LimitRange, it cannot be created in the namespace.

Before you begin

You need to have a Kubernetes cluster, and the kubectl command-line tool must be configured to communicate with your cluster. It is recommended to run this tutorial on a cluster with at least two nodes that are not acting as control plane hosts. If you do not already have a cluster, you can create one by using minikube, or you can use a Kubernetes playground.

You must have access to create namespaces in your cluster.

Each node in your cluster must have at least 1 GiB of memory available for Pods.

Create a namespace

Create a namespace so that the resources you create in this exercise are isolated from the rest of your cluster.

kubectl create namespace constraints-mem-example

Create a LimitRange and a Pod

Here's an example manifest for a LimitRange:

apiVersion: v1
kind: LimitRange
metadata:
  name: mem-min-max-demo-lr
spec:
  limits:
  - max:
      memory: 1Gi
    min:
      memory: 500Mi
    type: Container

Create the LimitRange:

kubectl apply -f https://k8s.io/examples/admin/resource/memory-constraints.yaml --namespace=constraints-mem-example

View detailed information about the LimitRange:

kubectl get limitrange mem-min-max-demo-lr --namespace=constraints-mem-example --output=yaml

The output shows the minimum and maximum memory constraints as expected. But notice that even though you didn't specify default values in the configuration file for the LimitRange, they were created automatically.

limits:
- default:
    memory: 1Gi
  defaultRequest:
    memory: 1Gi
  max:
    memory: 1Gi
  min:
    memory: 500Mi
  type: Container
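
The automatically created values in this output follow a simple pattern: the default limit takes the maximum, and the default request takes the default limit. The Python sketch below reproduces that pattern. It is an assumption-laden model based only on the output above, not the actual defaulting code, which may cover more cases; the function name `fill_limitrange_defaults` is hypothetical.

```python
# Illustrative model of how the missing defaults in the output above
# could be derived. Not Kubernetes source code; names are hypothetical.


def fill_limitrange_defaults(item):
    """Return a copy of a Container-type limit item with defaults filled in."""
    # Copy nested dictionaries so the caller's item is left untouched.
    filled = {
        key: dict(value) if isinstance(value, dict) else value
        for key, value in item.items()
    }
    default = filled.setdefault("default", {})
    default_request = filled.setdefault("defaultRequest", {})

    # A missing default limit takes the value of the maximum.
    for resource, value in filled.get("max", {}).items():
        default.setdefault(resource, value)
    # A missing default request takes the value of the default limit.
    for resource, value in default.items():
        default_request.setdefault(resource, value)
    return filled


item = {"max": {"memory": "1Gi"}, "min": {"memory": "500Mi"}, "type": "Container"}
print(fill_limitrange_defaults(item))
```

Values that the manifest sets explicitly are kept as written; only the missing entries are filled in.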

Now whenever you define a Pod within the constraints-mem-example namespace, Kubernetes performs these steps:

- If any container in that Pod does not specify its own memory request and limit, assign the default memory request and limit to that container.

- Verify that every container in that Pod requests at least 500 MiB of memory.

- Verify that every container in that Pod requests no more than 1024 MiB (1 GiB) of memory.
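
The two verification steps can be sketched in Python as follows. This is a simplified model, not the admission code: the helper names `to_bytes` and `violations` are hypothetical, and the sketch assumes that the minimum is compared with the container's memory request and the maximum with its memory limit.

```python
# Illustrative model of the minimum and maximum checks; not Kubernetes
# source code. Helper names are hypothetical.

UNITS = {"Ki": 2**10, "Mi": 2**20, "Gi": 2**30}


def to_bytes(quantity):
    """Convert a quantity such as "600Mi" or "1.5Gi" to bytes."""
    for suffix, factor in UNITS.items():
        if quantity.endswith(suffix):
            return int(float(quantity[: -len(suffix)]) * factor)
    return int(quantity)  # a plain number of bytes


def violations(request, limit, minimum="500Mi", maximum="1Gi"):
    """List the constraints that a container's memory settings break."""
    problems = []
    if to_bytes(request) < to_bytes(minimum):
        problems.append(f"request {request} is below the minimum {minimum}")
    if to_bytes(limit) > to_bytes(maximum):
        problems.append(f"limit {limit} exceeds the maximum {maximum}")
    return problems


# A 600Mi request with an 800Mi limit satisfies both constraints.
print(violations("600Mi", "800Mi"))
# An 800Mi request with a 1.5Gi limit breaks the maximum.
print(violations("800Mi", "1.5Gi"))
```

An empty list means that the container satisfies both constraints.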

Here's a manifest for a Pod that has one container. Within the Pod spec, the sole container specifies a memory request of 600 MiB and a memory limit of 800 MiB. These satisfy the minimum and maximum memory constraints imposed by the LimitRange.

apiVersion: v1
kind: Pod
metadata:
  name: constraints-mem-demo
spec:
  containers:
  - name: constraints-mem-demo-ctr
    image: nginx
    resources:
      limits:
        memory: "800Mi"
      requests:
        memory: "600Mi"

Create the Pod:

kubectl apply -f https://k8s.io/examples/admin/resource/memory-constraints-pod.yaml --namespace=constraints-mem-example

Verify that the Pod is running and that its container is healthy:

kubectl get pod constraints-mem-demo --namespace=constraints-mem-example

View detailed information about the Pod:

kubectl get pod constraints-mem-demo --output=yaml --namespace=constraints-mem-example

The output shows that the container within that Pod has a memory request of 600 MiB and a memory limit of 800 MiB. These satisfy the constraints imposed by the LimitRange for this namespace:

resources:
  limits:
    memory: 800Mi
  requests:
    memory: 600Mi

Delete your Pod:

kubectl delete pod constraints-mem-demo --namespace=constraints-mem-example

Attempt to create a Pod that exceeds the maximum memory constraint

Here's a manifest for a Pod that has one container. The container specifies a memory request of 800 MiB and a memory limit of 1.5 GiB.

apiVersion: v1

kind: Pod

metadata:

name: constraints-mem-demo-2

spec:

containers: